I was invited to give a keynote at the iSChannel 20th Anniversary celebration at my old university, the London School of Economics and Political Science, last week. Here’s my written version of the talk.

It’s great to be here today! I want to talk about these key points:

- AI will have a huge impact, but has significant downsides

- It can actively damage democratic societies

- You have the tools for a critical stance – share it!

AI – artificial intelligence – is not intelligent. “AI” is a marketing term, and there’s a significant amount of hype around the technology. At the same time, it does have the potential to change the fabric of work and society. This is a technology that calls out for a critical perspective rooted in the social sciences! My own perspective is, of course, heavily influenced by what I learned at LSE, so I think you as students have everything it takes to develop such a critical perspective.

Hype

When I say AI is hyped, I mean: People overreact and ascribe to tools abilities that are not there. This is nothing new. In the 1960s, German-born computing pioneer Joseph Weizenbaum developed a chatbot called ELIZA, which could simulate sessions with a therapist. Except it couldn’t – it was a simple rule-based tool that he created to show the possibilities and limitations of computers. As he put it:

“I wrote a software that’s nothing but theatre… But I know what’s behind the scene. I also know how trivial it is.”

Joseph Weizenbaum – from https://jw.weizenbaum-institut.de/wp03

He was shocked, however, by the response: “test subjects not only engaged in serious conversations with ELIZA, but also confided personal matters to the computer and attributed human qualities such as empathy to it. (…) Even practising psychotherapists announced that the programme could be ‘developed into an almost completely automatic form of psychotherapy’”.

We find this anthropomorphization of technology even today whenever somebody says “Claude says xy…” or “ChatGPT does z…”. It is not “AI” talking; you’re seeing the output of a networked, sociotechnical system consisting of data centres, software, training data, human input, etc. The data can be biased, and the humans may have interests that don’t match yours.

So like in Weizenbaum’s times, people ascribe huge potential benefits to AI systems, and fear falling behind if they do not adopt them. This might be especially so in Germany, my home country, as we’re still a bit behind with digitalization. This meme illustrates it:

The first step says “90% of data in PDFs and Excel, uncharted territory, no Internet access in the countryside”. My students are all working full time, and I teach a class where they have to make suggestions on how their company could be better digitalized. It is not unusual for someone from a large company to report that they still use Excel, or even paper, to store their important data. So clearly, their problem is not a “lack of AI”, but a lack of smart digitalization, which may or may not include AI. Nevertheless, our minister for digitalization (the first one ever) recently announced he wants to automate 20,000 administrative processes using agentic AI. What could possibly go wrong?

Turns out, quite a lot.

Limits

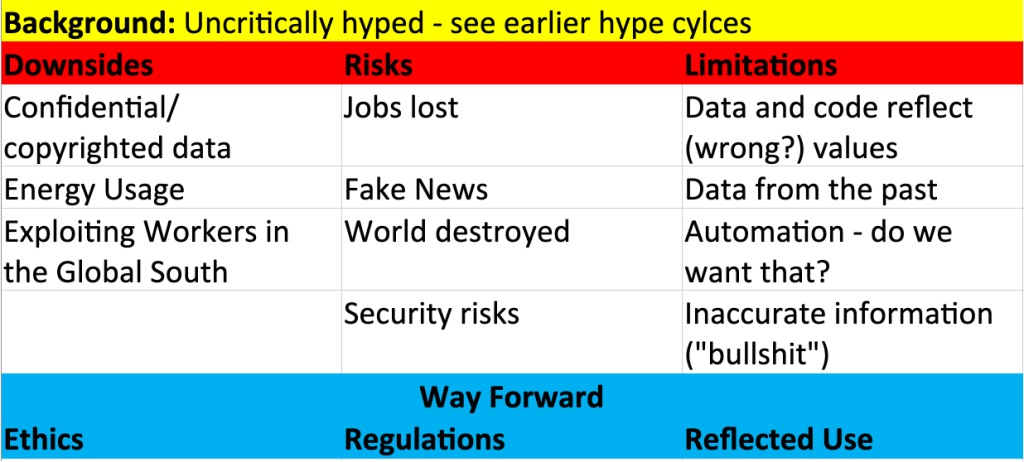

All the hype tends to ignore the risks, downsides and limitations of AI. I collected those in a discussion paper in 2024 (Allwein 2024). Here’s an overview. The risks range from the exploitation of workers in the Global South to the loss of jobs, the fact that the data is not neutral and, of course, “inaccurate information”, hallucinations or bullshit.

I also identified three ways forward to mitigate these: Ethics, Regulations, and Reflected Use of the technology (either not using it, or carefully verifying the output, …). I still think this has aged well. If anything, new risks have arisen since 2023, like the unsustainable business models of most “AI” firms.

But let’s not dwell on details.

More crucially, arguments have been made that “AI” as a technology is surprisingly close to fascism. There’s a small German book written by sociologist Rainer Mühlhoff that translates as “AI and the New Fascism” (Mühlhoff 2025b). He shows that popular AI narratives are based on

- anthropomorphisation

- exaggerated expectations

- technological promises for solving societal problems

…and comments dryly that “These often deviate significantly from the actual state of AI technology development.”

What about fascism? He shows that the worldviews common among tech leaders (think Elon Musk) are surprisingly compatible with 20th-century eugenics and racial theory. Mühlhoff also argues that AI can weaken administrative and democratic processes by making probabilistic decisions based on potentially biased data. What this means is that people who do not conform to the average might be disadvantaged, while not having any way to appeal such automated decisions. He concludes that using AI in administration is not compatible with the democratic legal system, as it does not guarantee a rule-based, fair process.

A similar perspective comes from the UK, from Dan McQuillan over at Goldsmiths (McQuillan 2022). He argues that AI is a political technology, and that its net effect is to amplify existing inequalities and injustices (he calls it “a colonial technology”), deepening existing divisions. He points to feminist standpoint theory and decolonial critiques as means to work towards structural alternatives.

There are a lot more critical perspectives emerging, and I’m in the process of reading all about them. Here are some more books from my shelf. Notice that Mühlhoff, the German sociologist, also published a book in English.

So we have these technologies that are extremely hyped. Organisations feel they have to adopt them for fear of being “left behind” and “losing out in the AI race”. The technologies come with risks, downsides, and limitations. They may be used to weaken democratic institutions and workers’ rights, so some people have associated them with fascism… Which leads us to my favourite question from PhD research seminars:

So what?

It is clear that as academics, we have to take a critical perspective. And as I mentioned, I believe you have all the tools for it already. I already mentioned a lot of the ingredients – let’s put them together:

- Obviously, we should not stop using AI altogether, but we have to use it with guardrails (ethics, regulations, reflected use) and only when needed. As the case of companies still using paper files shows, strategic digitalisation and a digital mindset may be more important than “AI”. Moreover, technology does not solve problems. We need the right technology, correctly implemented in an appropriate organisational context. You know this: the socio-technical view.

- It’s also no surprise for you that questions of power and politics need to be considered. In her classic paper, Lynne Markus mainly talked about resistance to IS implementation, but in a wider sense, we have seen how AI can be an instrument of power, and how it can weaken disadvantaged groups.

- Finally, taking a critical stance is part of this group’s DNA. A lot of the critical positions I mentioned are part of LSE’s, and the IS group’s, tradition. Let’s have more of that in the future!

And this is where you come in: I want you to take a critical stance on AI!

The current time of hype and uncertainty about AI is when critical, socio-technical perspectives like yours are most needed. Go out and talk to others at LSE and beyond, and help them find a balanced view of AI!

I hope you found this interesting and that I did not just tell you things you already know. I also hope I inspired some of you to adopt such a critical perspective. Let’s not leave the debate on AI to managers or technologists – we need social science perspectives!

References

- Allwein, F. (2024). ChatGPT – A Critical View. IU Discussion Papers IT & Engineering, 5(1). https://www.researchgate.net/publication/379824818_ChatGPT_-_A_Critical_View

- Bender, E. M., & Hanna, A. (2025). The AI Con: How to Fight Big Tech’s Hype and Create the Future We Want. Harper.

- Crawford, K. (2021). Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence. Yale University Press.

- Hao, K. (2025). Empire of AI: Dreams and Nightmares in Sam Altman’s OpenAI. Penguin Press.

- McQuillan, D. (2022). Resisting AI: An Anti-fascist Approach to Artificial Intelligence. Bristol University Press.

- Mühlhoff, R. (2025a). The Ethics of AI: Power, Critique, Responsibility. Bristol University Press.

- Mühlhoff, R. (2025b). Künstliche Intelligenz und der neue Faschismus. Reclam.